For years, I’ve been preaching the gospel of rigorous database change control and the importance of automating who can make what changes to the database, and when. As part of my efforts to educate the public about database change control, automation, continuous delivery and DevOps, we hold regular webinars and demos, which are widely attended.

The Scenario

A few days ago, I was presenting at a live webinar on the importance of safe release automation for financial service enterprises. Towards the end of the webinar, I simulated a case of configuration drift in production due to a manual hotfix.

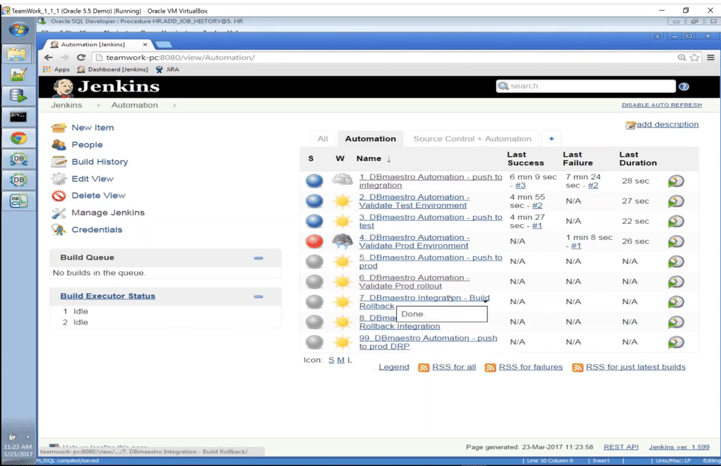

The idea was to demonstrate how DBmaestro would prevent me from pushing through the changes and overriding the hotfix.

According to my demo scenario, I was supposed to manually undo the change and then push the automated update forward successfully.

Uh-oh

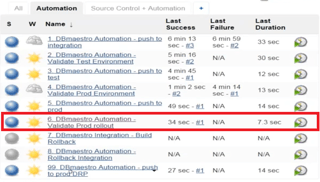

This is where I started running into problems. Because I had done this part manually, I failed to fully revert my changes. So, when I ran my automated pre-deployment validation, DBmaestro stopped me from going down that path. It prevented me from deploying over the drifted configuration, which in a real-world scenario could cause costly downtime.

Because this was all happening in a live webinar with 150 people watching, I didn’t realize all this at the time. All I saw was that glaring red light and felt the urgency to keep things moving. Under this pressure, I moved on to demonstrate the next scenario and did not deploy to production.

After the webinar ended and I had some breathing room, I went back and looked at the warning messages and the audit trail we had logged. It was then that I realized the mistake was all mine.

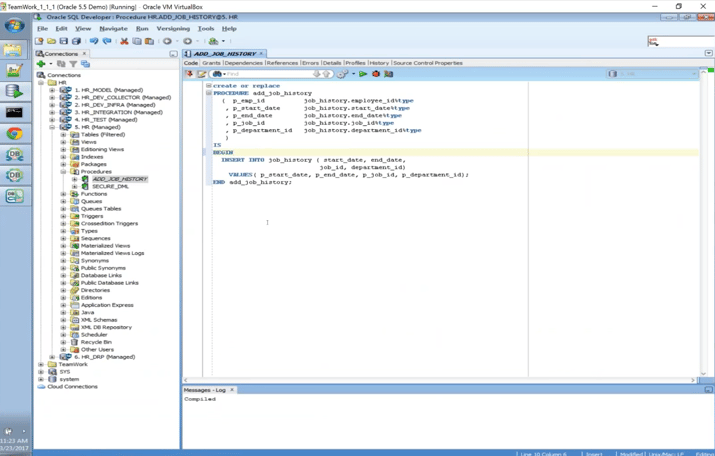

When I tried to revert the change I had introduced into production, I reverted only part of it: the comment. I failed to go on and revert the missing columns. Hence, I had not reverted to the correct configuration. For that reason, DBmaestro kept failing.
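To make the idea concrete, here is a minimal sketch of a drift check that would catch a partial revert like mine. It is not DBmaestro's implementation or API. The `TableSnapshot` type, the `find_drift` and `can_deploy` functions, and the `accounts` table with its columns are all hypothetical names invented for illustration, and a real tool compares far more than columns and comments.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TableSnapshot:
    """Hypothetical, simplified picture of one table's definition."""
    columns: frozenset
    comment: str = ""


def find_drift(baseline, live):
    """Return readable differences between the baseline and live schemas.

    Both arguments map a table name to a TableSnapshot.
    """
    problems = []
    for name in sorted(set(baseline) | set(live)):
        expected, actual = baseline.get(name), live.get(name)
        if expected is None:
            problems.append(f"{name}: table not in baseline")
            continue
        if actual is None:
            problems.append(f"{name}: table missing from live")
            continue
        for col in sorted(expected.columns - actual.columns):
            problems.append(f"{name}: missing column {col}")
        for col in sorted(actual.columns - expected.columns):
            problems.append(f"{name}: unexpected column {col}")
        if expected.comment != actual.comment:
            problems.append(f"{name}: comment differs")
    return problems


def can_deploy(baseline, live):
    """Allow a deployment only when the live schema matches the baseline."""
    return not find_drift(baseline, live)


# Assumed example data: the approved state of a made-up table.
baseline = {
    "accounts": TableSnapshot(
        columns=frozenset({"id", "balance", "currency"}),
        comment="customer accounts",
    )
}

# A partial manual revert: the comment is back, one column is still missing.
partially_reverted = {
    "accounts": TableSnapshot(
        columns=frozenset({"id", "balance"}),
        comment="customer accounts",
    )
}

drift = find_drift(baseline, partially_reverted)
```

With this made-up data, `drift` holds a single entry reporting the missing `currency` column, so `can_deploy(baseline, partially_reverted)` returns `False`. The comment looks right, yet the deployment stays blocked until the columns match too.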

The Lesson

To me, there are a number of lessons here. Conducting a webinar in front of a large, live audience is comparable to working under the pressure of deploying changes to a large and critical database. I had played out this scenario dozens of times, but under these circumstances, I made a mistake. We are all human, and we can all make mistakes when doing something manually.

As it turned out, the demo gods forced me to show, live and in real time, how downtime happens and how automation and the right database change control tool can prevent it.

It ended up being a fantastic way to demonstrate the entire point of the webinar: when working manually, mistakes are inevitable.

So how do we prevent downtime? We automate, we validate configuration, we enforce policy, we enforce security roles, and we audit.

In the scenario played out in this webinar, damage to production was prevented.