When creating an application or a database, it is crucial to consider how you approach agile database design from the outset in order to avoid issues down the road. Because change is bound to happen, you need a system that, like application source code, allows change to occur as smoothly as possible.

A scalable application depends on good database design: the data model and the database design define the structure of the database and the relationships between the data sets, and they are part of the foundation on which the application is built.

Application Source Code

Database design is not the same as application source code. Both act as blueprints for something that will be built, and both can change over time, but when the source code of an application changes, the entire application is rebuilt.

The existing instances are not simply modified, but are created anew. In contrast, database changes are applied directly to the instance. For this reason, application source code editing and maintenance methods will not work for a database design.

In addition, while application source code only cares about the final state it ends up in, in database design each individual change must be considered and noted. Every change to the database needs to be recorded individually so that it can be applied to other instances of that database, and the final state of the database design should be recorded in the database model.

Furthermore, changes should be made to an existing database instance only by executing specific SQL statements. A change cannot be partially implemented: it either completes successfully or is not applied at all. Changes should also be executed in the correct sequence, both for repeatability and to respect any dependencies that exist between them.
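The all-or-nothing and ordering rules can be sketched in a few lines. This is a minimal illustration, not a definitive implementation: it assumes SQLite through Python's standard `sqlite3` module (chosen only because it runs without a server), and the names `apply_change` and `apply_in_order` are hypothetical.

```python
import sqlite3

def apply_change(conn, statements):
    """Run all statements of one change as a single all-or-nothing unit."""
    conn.execute("BEGIN")
    try:
        for sql in statements:
            conn.execute(sql)
        conn.execute("COMMIT")
        return True
    except sqlite3.Error:
        # Undo anything the failed change already did.
        if conn.in_transaction:
            conn.execute("ROLLBACK")
        return False

def apply_in_order(conn, changes):
    """Apply (sequence, statements) pairs in ascending sequence order.

    Stops at the first failure so later changes never run against a
    database that is missing one of their dependencies.
    """
    applied = []
    for sequence, statements in sorted(changes, key=lambda c: c[0]):
        if not apply_change(conn, statements):
            break
        applied.append(sequence)
    return applied
```

The connection is expected to be opened with `sqlite3.connect(path, isolation_level=None)` so that the explicit `BEGIN`, `COMMIT` and `ROLLBACK` statements control the transaction. Not every database product can roll back schema changes inside a transaction, so check how yours behaves before relying on this pattern.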

Best Practices

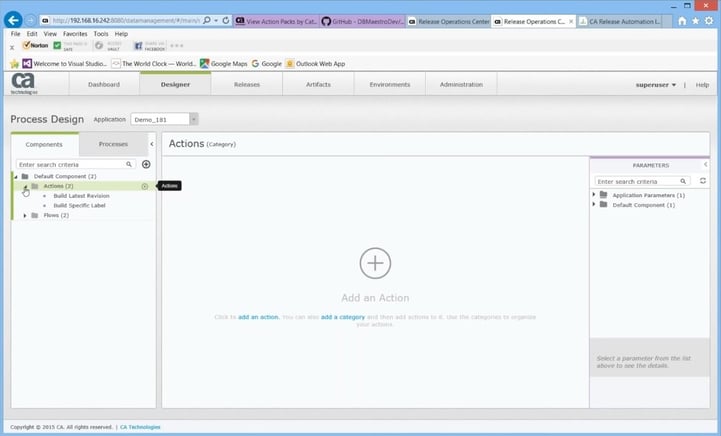

As a best practice, anyone who wants to change the database design must first file a request, and these requests should be recorded somewhere to provide an audit trail. Each change should then be reviewed and approved by the data modeller to ensure a good, scalable design.

The data modeller will also be responsible for updating the database model, which is the record of the database design, and producing the SQL statement that implements that change.

Much of this work can also be automated, so the role of database designer or data modeller is a full-time job only during the first stage of development.

While all development cycles will involve the database designer, the role can later be filled by someone else on the team, such as a developer or database administrator, because it is no longer as time-consuming as it was at the outset.

Each database change should be given its own unique identifier, which identifies the particular change, regardless of the database instances it is applied to, or which versions of the application it appears in.

Each change to a particular database instance must be recorded within the database instance itself in order to avoid applying the same changes twice. This implies that you maintain some form of change history table within each database instance, which should include the date and time each change occurred.
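A change history table along these lines might look like the following sketch. The table name `change_history`, its columns, and the helper functions are assumptions made for illustration, and SQLite (via Python's standard `sqlite3` module) stands in for whichever database product you use.

```python
import sqlite3
from datetime import datetime, timezone

def ensure_history_table(conn):
    """Create the change history table if this instance does not have one yet."""
    with conn:
        conn.execute(
            """CREATE TABLE IF NOT EXISTS change_history (
                   change_id  TEXT PRIMARY KEY,  -- unique identifier of the change
                   applied_at TEXT NOT NULL      -- UTC timestamp, ISO 8601
               )"""
        )

def is_applied(conn, change_id):
    """Return True if this change was already applied to this instance."""
    row = conn.execute(
        "SELECT 1 FROM change_history WHERE change_id = ?", (change_id,)
    ).fetchone()
    return row is not None

def record_change(conn, change_id):
    """Record that a change has been applied, with the date and time."""
    with conn:
        conn.execute(
            "INSERT INTO change_history (change_id, applied_at) VALUES (?, ?)",
            (change_id, datetime.now(timezone.utc).isoformat()),
        )
```

Making the change identifier the primary key means the database itself rejects an attempt to record the same change twice, which acts as a second line of defence behind the `is_applied` check.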

The set of changes should be stored in a single change log that includes the change identifier and the SQL statement that implemented each change, so that all necessary changes can be easily located and sequenced.

This change log should be well structured so that a program can read and decode the changes in it. Each version of the application source code should carry its own copy of the database design and the change log file so that changes can be made independently.

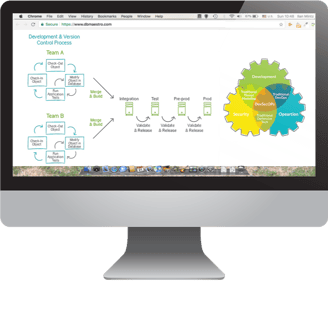

Database Changes

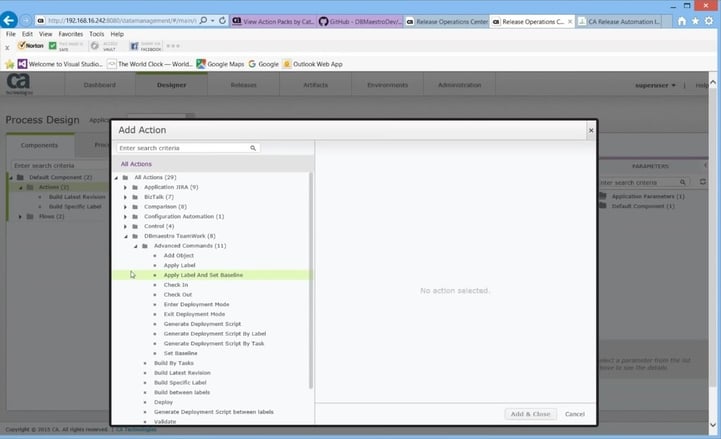

SQL statements are often specific to one database product, such as Oracle, Sybase, SQL Server or MySQL. The change log will need to record a separate SQL statement for each supported database product for each database change. Thus, each change that you make will have an identifier and, for each database product, an SQL statement that is clearly labelled with the product name.
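One possible shape for such a change log is sketched below, assuming it is stored as XML. The element and attribute names (`changelog`, `change`, `sql`, `product`), the change identifiers, and the `load_changes` function are all hypothetical, and the SQL statements are only examples.

```python
import xml.etree.ElementTree as ET

# Illustrative change log: one <sql> element per supported product per change.
CHANGE_LOG_XML = """
<changelog>
  <change id="2017-001">
    <sql product="oracle">ALTER TABLE customer ADD (email VARCHAR2(255))</sql>
    <sql product="mysql">ALTER TABLE customer ADD COLUMN email VARCHAR(255)</sql>
  </change>
  <change id="2017-002">
    <sql product="oracle">CREATE INDEX idx_customer_email ON customer (email)</sql>
    <sql product="mysql">CREATE INDEX idx_customer_email ON customer (email)</sql>
  </change>
</changelog>
"""

def load_changes(xml_text, product):
    """Return (change_id, sql) pairs for one database product, in file order."""
    root = ET.fromstring(xml_text.strip())
    changes = []
    for change in root.findall("change"):
        change_id = change.get("id")
        sql = change.find(f"sql[@product='{product}']")
        if sql is None:
            # Fail loudly rather than silently skipping a change.
            raise ValueError(f"change {change_id} has no SQL for {product}")
        changes.append((change_id, sql.text.strip()))
    return changes
```

Calling `load_changes(CHANGE_LOG_XML, "mysql")` returns the MySQL statements for both changes in order, while asking for a product that a change does not cover raises an error instead of leaving a gap in the sequence.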

This whole solution is brought together in a program that is run during an application upgrade. It opens the change log XML file and reads in each change. If that change has not yet been applied to this particular database instance, then the corresponding SQL statement is executed and a record is inserted into the Change History metadata table.

The importance of a well-made agile database design cannot be overstated. While there are many best practices for developers, these are some specific tips that I have found to be useful. I believe that if you follow these tips you can ensure a scalable application that will run smoothly, despite the changes.
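A rough sketch of such an upgrade program follows. It is an illustration under stated assumptions rather than a reference implementation: the XML layout, the `change_history` table, the sample log and the `upgrade` function are hypothetical, and SQLite (via Python's standard `sqlite3` module) is used so the example runs anywhere.

```python
import sqlite3
import xml.etree.ElementTree as ET
from datetime import datetime, timezone

# Hypothetical change log content; a real program would read it from a file.
SAMPLE_LOG = """
<changelog>
  <change id="001"><sql product="sqlite">CREATE TABLE customer (id INTEGER PRIMARY KEY)</sql></change>
  <change id="002"><sql product="sqlite">ALTER TABLE customer ADD COLUMN email TEXT</sql></change>
</changelog>
"""

def upgrade(conn, xml_text, product="sqlite"):
    """Apply every change in the log that this instance has not seen yet."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS change_history ("
        "change_id TEXT PRIMARY KEY, applied_at TEXT NOT NULL)"
    )
    applied_now = []
    for change in ET.fromstring(xml_text.strip()).findall("change"):
        change_id = change.get("id")
        seen = conn.execute(
            "SELECT 1 FROM change_history WHERE change_id = ?", (change_id,)
        ).fetchone()
        if seen:
            continue  # already applied to this instance
        sql = change.find(f"sql[@product='{product}']")
        if sql is None:
            raise ValueError(f"change {change_id} has no SQL for {product}")
        # Execute the change and record it in one transaction.
        conn.execute("BEGIN")
        try:
            conn.execute(sql.text)
            conn.execute(
                "INSERT INTO change_history (change_id, applied_at) VALUES (?, ?)",
                (change_id, datetime.now(timezone.utc).isoformat()),
            )
            conn.execute("COMMIT")
        except sqlite3.Error:
            if conn.in_transaction:
                conn.execute("ROLLBACK")
            raise
        applied_now.append(change_id)
    return applied_now
```

Opened with `sqlite3.connect(path, isolation_level=None)`, a first call to `upgrade(conn, SAMPLE_LOG)` applies both changes and a second call applies none, which is the repeatability the change history table is meant to provide.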
